Measles is back. Not because we lack a vaccine, but because we lack immunity to misinformation.

The UK recently lost its official status as a country that has eliminated measles. At least 195 children contracted measles in January and February this year, compared with 156 the previous year.

Scientists discovered a 99% effective vaccine more than 50 years ago. Yet more than one-third of five-year-olds in Enfield - the centre of one of the outbreaks - did not receive both doses of the Measles, Mumps and Rubella (MMR) vaccine in 2024/25.

The claims of a link between the MMR vaccine and autism have long since been disproven, but vaccination rates continue to decline as vaccine hesitancy persists.

The root cause is not the safety or effectiveness of vaccines. The science has not changed. But our information environment, and the conspiracy theories that flourish in it, have.

To respond effectively, we need to treat misinformation like a virus too.

When misinformation goes viral

Plenty of people hope their social media content will “go viral.” As it turns out, that is more than just a catchphrase. The way misinformation spreads on social media closely resembles the behaviour of mathematical models designed to simulate the spread of pathogens.

And misinformation is reaching epidemic levels. According to YouGov, 72% of Britons say they are worried about the spread of false information on social media. According to Ofcom, 56% of social media users say they have seen false or misleading news in the past year. The decision by social networks to reduce fact-checking is making this fog of misinformation denser still.

This content is of course perfectly legal. It is not illegal to tell a lie, and neither should it be. But legality is not the same as harmlessness. When false claims erode vaccine uptake, the consequences are measured in hospital admissions.

So how should we as communications leaders respond?

Traditional rebuttal techniques are insufficient and can even be counter-productive. Disinformation travels faster online than it can be disproven. Rebuttal often ends up amplifying false content rather than shutting it down. As people refute the content, they repeat it and algorithms optimised for engagement then give it a new wave of visibility.

In a low-trust environment, rebuttals from a pharmaceutical company or government agency are often dismissed with: “Well, that’s what they would say, wouldn’t they?” Information provided by family or friends, by contrast, is regarded warmly: “What reason would they have to lie?”

As RAND has put it: “don’t expect to counter the firehose of falsehood with the squirt gun of truth.”

Government and business need a more proactive approach to tackling information threats. We need to reduce the spread of false information before it takes hold, rather than merely debunking it afterwards.

Pre-bunking

‘Pre-bunking’ may be part of the solution. If the way misinformation spreads is similar to how diseases spread, then perhaps our response should be too.

Like a pathogen, misinformation spreads through contact. The more people share it, and the more susceptible people are to believing it, the faster it spreads.

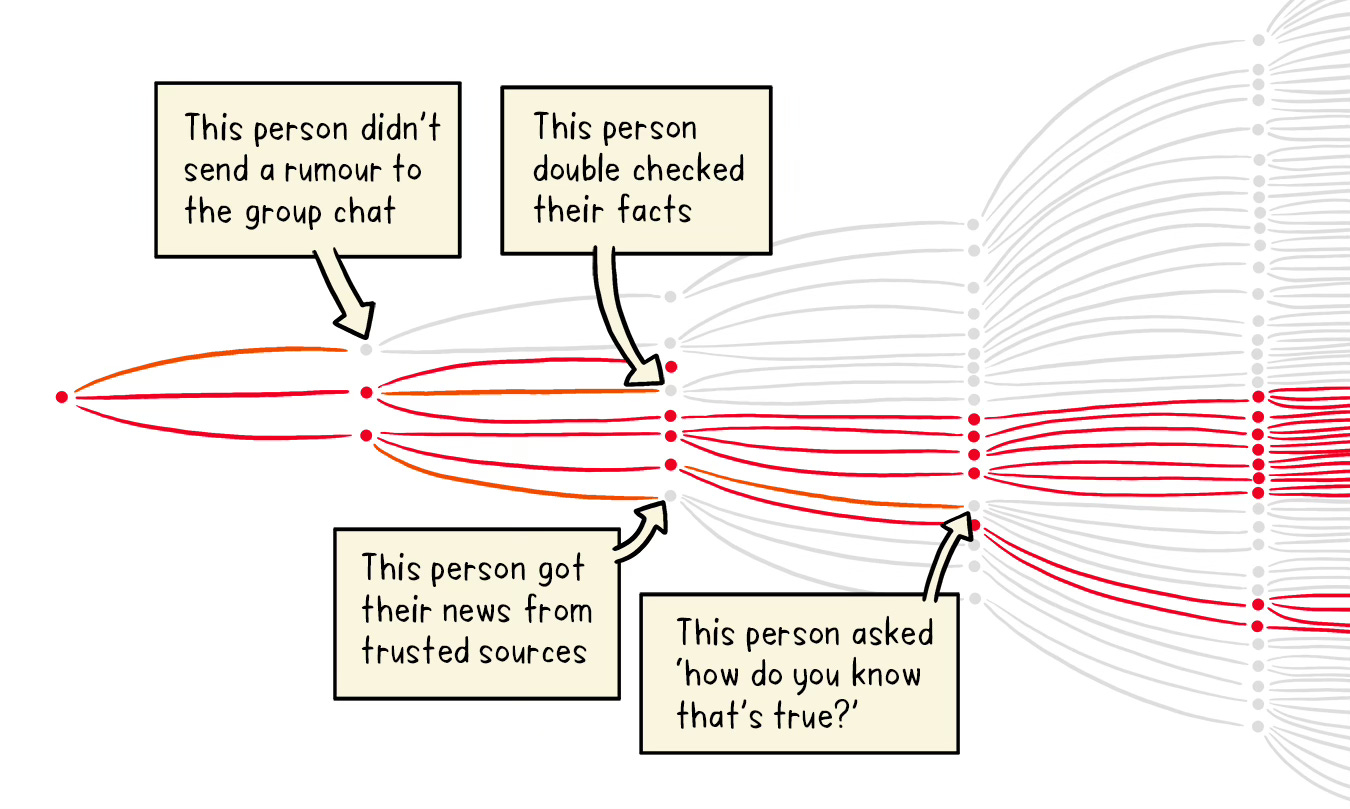

You probably remember from the COVID-19 pandemic that breaking a few links in the chain can have a dramatic impact on the number of infections. So it is with misinformation. Persuading just a few people to think twice before sharing information can have a dramatic impact on the spread.

Pre-bunking reduces the likelihood that people share misinformation and makes them less susceptible to misinformation when they encounter it.
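To make the epidemic analogy concrete, here is a minimal sketch of a textbook SIR-style model applied to sharing. Every number in it (population, contacts per day, share probabilities, how long someone keeps sharing) is an illustrative assumption, not an estimate from any study, and the function name is hypothetical.

```python
def total_sharers(share_probability, population=100_000, seeds=10,
                  contacts_per_day=10, days_active=2, days=365):
    """Discrete-time SIR-style sketch: how many people ever share a false claim.

    All parameter values are illustrative assumptions, not measured data.
    """
    susceptible = float(population - seeds)  # people who have not shared yet
    sharing = float(seeds)                   # people actively sharing today
    for _ in range(days):
        # Each active sharer reaches `contacts_per_day` people; only those
        # who have not already shared can be persuaded to pass it on.
        new_sharers = (contacts_per_day * share_probability
                       * sharing * susceptible / population)
        new_sharers = min(new_sharers, susceptible)
        susceptible -= new_sharers
        # On average a sharer stops after `days_active` days.
        sharing += new_sharers - sharing / days_active
    return round(population - susceptible)


baseline = total_sharers(share_probability=0.06)    # no pre-bunking
prebunked = total_sharers(share_probability=0.045)  # a quarter fewer reshares
print(baseline, prebunked)
```

In this toy set-up the first run tips over into a full outbreak while the second fizzles out, because the lower share probability pushes the average number of onward shares per sharer below one. Real platforms are far messier, but the threshold effect is the point of the analogy.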

It does so by exposing people to common disinformation techniques so they are better able to identify and resist manipulative content when they see it. A pre-bunk might, for example, warn people that bad actors often use emotionally charged language or quote “experts” with impressive-sounding but irrelevant credentials.

It provides a layer of protection before individuals encounter malicious content.

How to pre-bunk: a five-step guide

Pre-bunking can be a practical communications technique, rather than an abstract academic theory. Here’s a five-step guide on how to pre-bunk:

The first step is to monitor the information environment properly. You need to know which narratives are circulating, where they are gaining traction, who is amplifying them and which audiences are most exposed. That does not require an intelligence agency, but it does require more than media monitoring in the old sense.

The goal, and the difference, is to spot patterns early enough to act. The key questions to ask of any monitoring tool are: Does it improve my preparedness to respond? Will it buy me extra time by giving me early warning? Will I be able to use the information to do something proactive in response? Analysis and insight are only of value if they can be applied. It is easy to disappear down a rabbit-hole of ever deeper analysis without yielding insight that is actionable.
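As a rough illustration of what “early warning” can mean in practice, the sketch below flags any narrative whose latest daily mention count jumps well above its recent baseline. The narrative labels, the counts, the seven-day window and the threshold are all hypothetical assumptions; a real monitoring tool would need its own data source and tuning.

```python
from statistics import mean, stdev


def flag_emerging(daily_mentions, baseline_days=7, threshold=3.0):
    """Return narratives whose latest count exceeds baseline mean + threshold * stdev.

    `daily_mentions` maps a narrative label to a list of daily counts,
    oldest first. Window and threshold are illustrative assumptions.
    """
    flagged = []
    for narrative, counts in daily_mentions.items():
        if len(counts) <= baseline_days:
            continue  # not enough history to form a baseline
        baseline = counts[-(baseline_days + 1):-1]  # the days before the latest
        latest = counts[-1]
        spread = stdev(baseline) or 1.0  # avoid a zero-width band on flat data
        if latest > mean(baseline) + threshold * spread:
            flagged.append(narrative)
    return flagged


# Hypothetical counts, invented for this example.
mentions = {
    "vaccine-ingredient scare": [10, 12, 11, 9, 10, 11, 12, 40],
    "clinic opening hours": [50, 52, 49, 51, 50, 48, 53, 52],
}
print(flag_emerging(mentions))  # ['vaccine-ingredient scare']
```

The design choice worth noting is that the check compares each narrative with its own recent history rather than with a fixed volume, so a small but fast-growing story is flagged before a large but stable one.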

The second step is to identify methods of manipulation rather than chasing individual falsehoods. In vaccine debates, the exact claims change, but the methods are familiar: emotional language, false experts, conspiracy framing, false balance and cherry-picked anecdotes. This is where pre-bunking works best because it builds people’s understanding of how they may be misled, not just what to think about one specific claim.

The third step is to design messages people can absorb quickly. Effective pre-bunks have three parts: a warning that misleading content may be coming, a simple explanation of the tactic being used, and a safe example that helps audiences recognise the pattern without reinforcing a falsehood.

In practice, pre-bunking can be general or specific. You can either teach audiences the manipulation techniques they are likely to encounter, or warn them in advance about a particular false claim you expect to emerge. In some cases, it may also be worth flagging channels or actors with a track record of spreading falsehoods.

The fourth step is to choose the right messenger. In a low-trust environment, you may not be the most persuasive voice. In most cases, a nurse or clinician will be a more trusted voice on vaccines than a government minister or corporate CEO. Who is most likely to gain your audience’s attention and trust?

The final step is to test, refine and repeat. Ideally you should evaluate success on two levels. First, whether the communications worked, which you can assess by tracking your audiences’ ability to identify misinformation and their willingness to share it. And second, whether your response system has improved. Are you identifying and responding to threats more quickly?
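One simple way to track the first of those levels is to compare how often people correctly spot a manipulation technique with and without seeing the pre-bunk, and report the gap in percentage points. The sketch below uses invented quiz results and hypothetical function names; it is not the method of any particular study.

```python
def identification_rate(answers):
    """Share of answers that correctly identified the manipulation technique."""
    return sum(answers) / len(answers)


def uplift_points(control, exposed):
    """Difference in identification rate, in percentage points."""
    return 100 * (identification_rate(exposed) - identification_rate(control))


# Hypothetical quiz results: True means the technique was correctly spotted.
control = [True] * 11 + [False] * 9   # 11 of 20 without the pre-bunk
exposed = [True] * 12 + [False] * 8   # 12 of 20 after the pre-bunk

print(f"{uplift_points(control, exposed):.1f} percentage points")  # 5.0 percentage points
```

Reporting percentage points rather than a relative percentage keeps the result comparable across audiences that start from different baselines.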

Does pre-bunking work?

A 2022 study led by Jon Roozenbeek and Sander van der Linden found that the share of individuals who could correctly identify a manipulation technique increased by 5 percentage points on average after viewing a pre-bunking video. So it’s not a silver bullet, but there is evidence it has a material effect. Here’s a short excerpt from the abstract of that study:

“We developed five short videos that inoculate people against manipulation techniques commonly used in misinformation: emotionally manipulative language, incoherence, false dichotomies, scapegoating, and ad hominem attacks. … We find that these videos improve manipulation technique recognition, boost confidence in spotting these techniques, increase people’s ability to discern trustworthy from untrustworthy content, and improve the quality of their sharing decisions.”

A 2024 initiative by Moonshot, Google and Jigsaw claimed to have used similar pre-bunking techniques to help at least 1.5 million viewers recognise manipulation techniques in advance of European elections.

When I was at the Home Office, we worked with financial services companies on similar techniques to prevent fraud. Campaigns such as ‘Take Five’ and ‘Stop! Think Fraud’ were aimed at shaping consumer behaviour and building resilience to scams. UK Finance claimed that a 14% fall in impersonation scam losses in the first half of 2025 was driven in part by education campaigns like these.

However, there is much less evidence of companies in other sectors using the same kind of pre-bunking techniques to defend their own brand or reputation against disinformation.

That feels like a missed opportunity.

Conclusion

Pre-bunking gives you a way of acting proactively, right now, to inoculate people against the types of mis- or disinformation they might see about your organisation. It measurably improves people’s ability to spot misinformation and reduces their propensity to share it.

We vaccinate against measles before exposure because prevention works better than cure.

In an age of viral misinformation, communications leaders should be applying the same logic to their information environment.

If we wait to rebut every false claim after it spreads, we will always be behind the curve.

Deep cuts

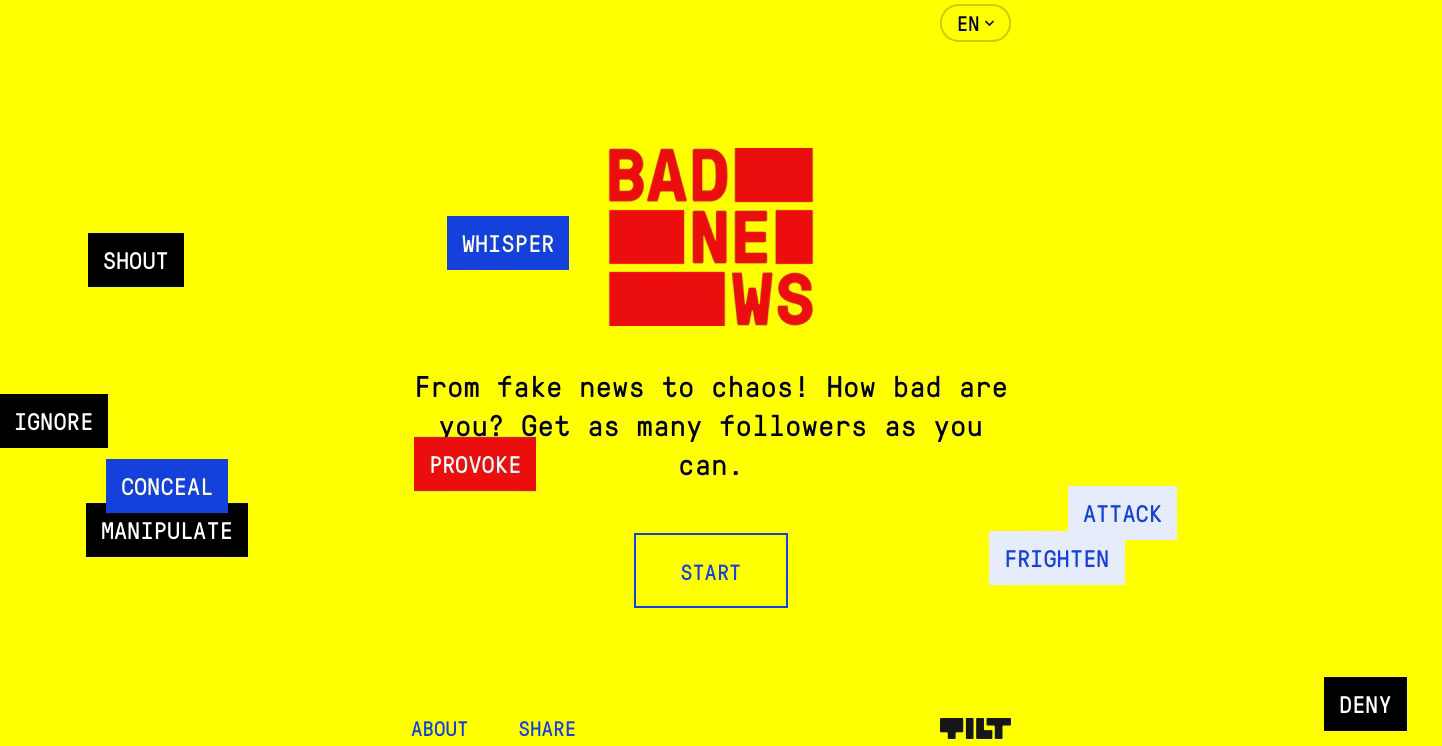

If you’re interested in exploring pre-bunking in more detail, then I’d recommend two practical guides:

A practical guide to pre-bunking misinformation, which was a collaboration by Google/Jigsaw, Cambridge University and BBC Media Action.

The Government Communication Service RESIST v3 framework developed by Dr James Pamment.

…and one book:

Foolproof: Why we fall for misinformation and how to build immunity, by Professor Sander van der Linden of Cambridge University.

Definitions:

Misinformation (a mistake). False information shared without intent to mislead.

Disinformation (a lie). False information that the originator knew to be untrue and shared with the intention of deceiving.